The UK government has issued a stark warning to technology executives: they could face jail time if their platforms fail to promptly remove non-consensual intimate images. The new legal framework aims to hold companies accountable for the spread of such content, a move that could significantly change how social media and other online platforms operate. The proposed law will require tech companies to implement effective measures for the swift removal of these images, whose circulation often causes victims severe emotional and psychological distress.

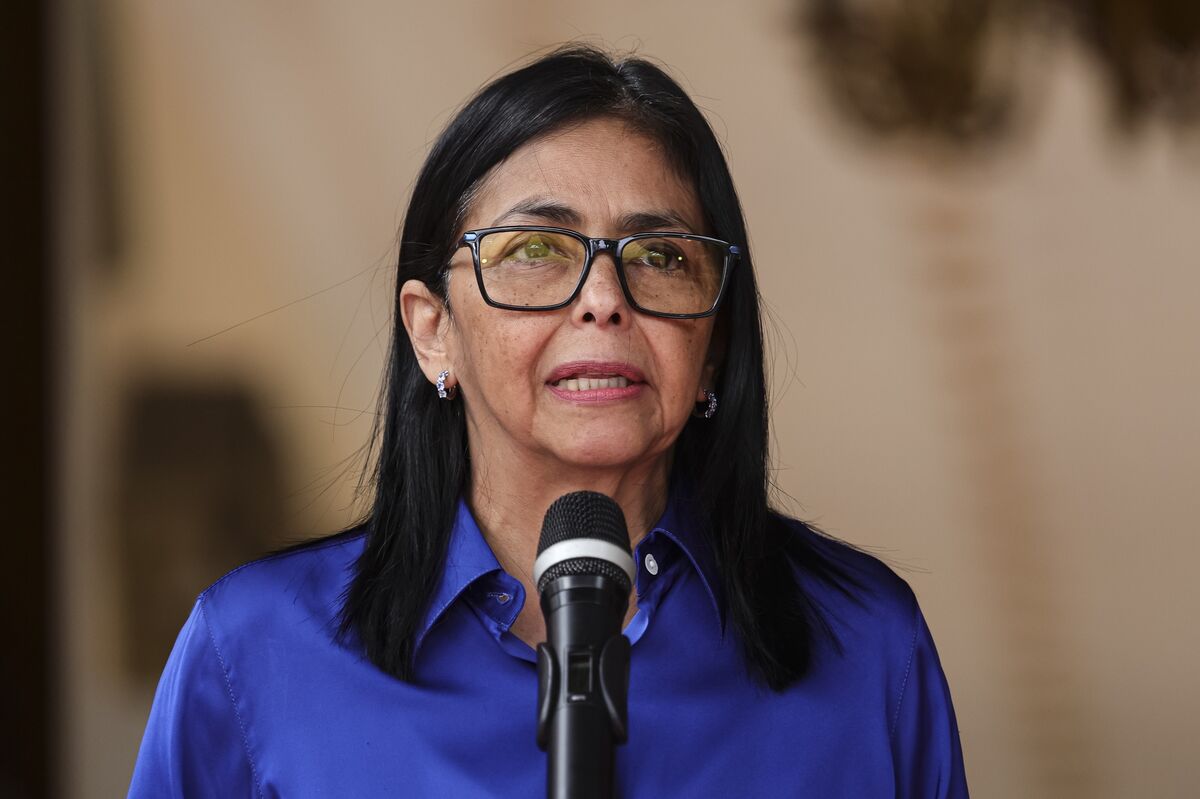

Digital Secretary Michelle Donelan emphasized the importance of this initiative, asserting that no one should suffer the indignity of having their intimate images shared without consent. The proposed legislation reflects a growing recognition of the impact of online harassment and aims to enhance protections for individuals, particularly women, who are disproportionately affected by this issue. Donelan stated, "This is about ensuring that victims of these heinous crimes are supported and that the perpetrators are held accountable."

The tech industry is preparing for potential changes in compliance and operational practices. Social media companies will need to invest in technologies and processes that can identify and remove non-consensual images quickly. The new law is expected to require companies to report incidents involving such images and to work actively to prevent their dissemination. Industry representatives have raised concerns about the feasibility of these requirements, arguing that implementation could impose significant burdens on smaller platforms.

Under the proposed legislation, executives could face criminal charges if their companies fail to comply with the new requirements. This marks a significant shift in how online platforms are regulated in the UK, moving from a largely self-regulatory environment to one with stringent legal repercussions. Experts suggest that this could lead to a reevaluation of content moderation policies across the board, as companies will need to prioritize compliance to avoid legal liabilities.

For victims of non-consensual image sharing, this legislation could provide a sense of empowerment and justice. Many individuals have long felt that their suffering was ignored by tech companies that failed to take adequate action against harmful content. The new law aims to create a safer online environment, offering victims more recourse and support in the aftermath of such violations. Advocacy groups have welcomed the initiative, highlighting its potential to deter future incidents.

The UK government is expected to outline further details regarding the enforcement mechanisms of this new law in the coming months. Stakeholders, including tech companies and advocacy groups, will likely engage in discussions on how best to implement the required changes while balancing user privacy and platform accountability. As the legislation moves forward, the tech industry will need to adapt quickly to these evolving legal standards to ensure compliance and protect users effectively.
